Got to say, it’s very sporting of AWS to make compliance with their terms of service and acceptable use policy optional.

Year: 2026

Hackers Simply Asked Meta AI to Give Them Access to High-Profile Instagram Accounts. It Worked

This is a repost promoting content originally published elsewhere. See more things Dan's reposted.

Hackers say that they used Meta’s AI support chatbot to break into a host of high-profile Instagram profiles by asking the support bot to change the email address associated with the target account. The claims coincide with a series of high-profile Instagram account takeovers, including the Barack Obama White House account, the Chief Master Sergeant of Space Force’s account, and Sephora’s account.

…

Well, this is unsurprising and unshocking. Turns out that if you give your chatbot help interface unrestricted access to your backend systems – rather than, say, the access level of the human talking to it – then obviously hackers are going to try to jailbreak it in ways that you can’t possibly predict or guard against and, if/when they succeed, they’ll break into all the systems to which you’ve given it access.

This shouldn’t even have to be said. Meta’s mistake here is so self-evident that they should be embarrassed.
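To make the alternative concrete, here's a minimal Go sketch of a support bot whose only "change email" tool authorises against the session of the human in the chat, rather than running with backend-wide privileges. If the model is jailbroken, the worst it can do is what that person could already do themselves. `Session`, `Account`, `changeEmail` and the simple ownership check are all hypothetical and bear no relation to any real Meta system.

```go
package main

import (
	"errors"
	"fmt"
)

// Session is the (hypothetical) identity of the human talking to the bot,
// established by the normal login flow, never by the chatbot itself.
type Session struct {
	UserID        string
	Authenticated bool
}

// Account is a hypothetical user account.
type Account struct {
	OwnerID string
	Email   string
}

var ErrForbidden = errors.New("forbidden: caller does not own this account")

// changeEmail is the only tool the chatbot may call. It checks the caller's
// own session, so no amount of clever prompting lets the bot act on an
// account the caller couldn't change themselves.
func changeEmail(s Session, acct *Account, newEmail string) error {
	if !s.Authenticated || s.UserID != acct.OwnerID {
		return ErrForbidden
	}
	acct.Email = newEmail
	return nil
}

func main() {
	target := &Account{OwnerID: "user-1", Email: "owner@example.com"}
	attacker := Session{UserID: "user-2", Authenticated: true}

	fmt.Println(changeEmail(attacker, target, "attacker@example.com"))
	fmt.Println(target.Email) // still owner@example.com
}
```

The design choice is simply that the model never holds more privilege than the user it's talking to.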

Sending a test email from WordPress/ClassicPress using WP-CLI

Note to self: ignore search results that say to install a plugin; the absolute fastest way to send a test email from a WordPress/ClassicPress installation (assuming you’re using WP-CLI) is just to run something like:

```shell
wp eval 'wp_mail("recipient@example.com", "Test Email", "A test email from WP-CLI");'
```

The “ChangeNames.co.uk” Scam

👋 Hi! If you came here after going to ChangeNames.co.uk, congratulations: you just dodged getting scammed.

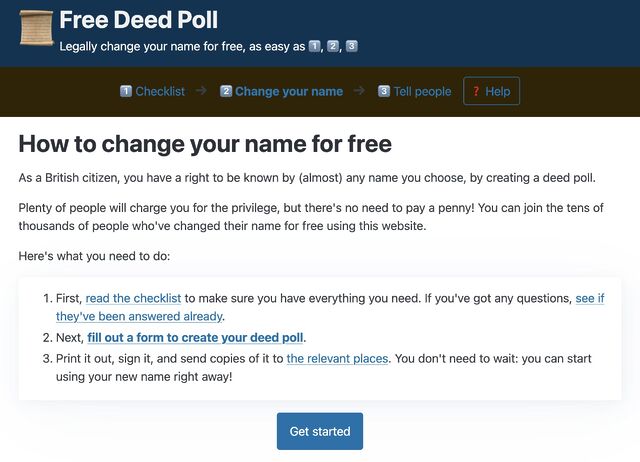

To actually change your name for free as a British citizen, without giving your personal information to scammers (or anybody else who doesn’t need it!), I suggest you use FreeDeedPoll.org.uk. Want an alternative? DeedPoll.lgbt is good too!

I help people change their names

As a British citizen, you can change your name for free. That’s the entire premise behind my website FreeDeedPoll.org.uk, which since 2011 has helped thousands of people change their names¹ for free and without a solicitor.

I aim to run the most-ethical service of its type:

- As noted, it’s completely free and collects no personal information whatsoever.

- It’s funded out of my own pocket so it doesn’t need to depend upon advertising.

- It’s open source so anybody can inspect my code, or run it themselves, or even set up a “competing” copy (so long as they give away the code to that, too)!

- I try to answer every email I receive from anybody who’s having difficulty with the process.²

Scammers will barely help you, but they will steal your data

Others, however, don’t.

I’m not talking about all the paid-for services. Some of them provide a useful service, albeit one that you don’t strictly need to pay for. I’m not a fan of those that try to market themselves as “official”, though, because that just feels like fraud. No, I’m talking about a level of sliminess that goes well beyond merely charging somebody for something they’re entitled to for free.

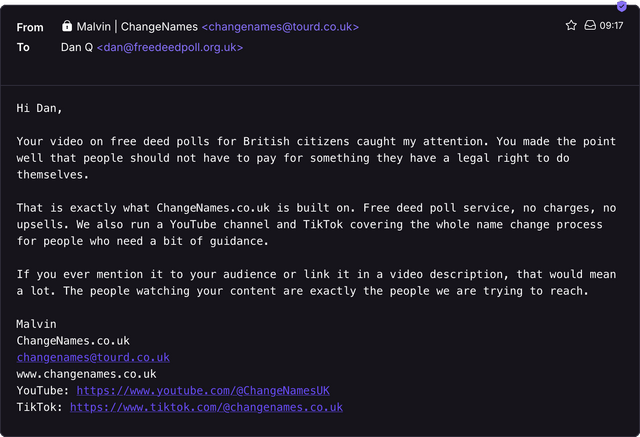

Like… let me show you an email I received today:

I tried to visit their website, but it looks like they haven’t even bought the domain name they’re advertising yet. Just for fun, I’ve registered it and set it up as a permanent redirect to this blog post³.

Their TikTok channel exists, but it’s not at the URL they provided. So far, so incompetent.

![Screengrab from a YouTube video showing a white woman with brown-and-red hair saying "please see the FAQs for any questions you have have around deed polls[sic] and the rules." alongside a logo for "Change Names".](https://bcdn.danq.me/_q23u/2026/06/changenames-youtube-video-640x360.png)

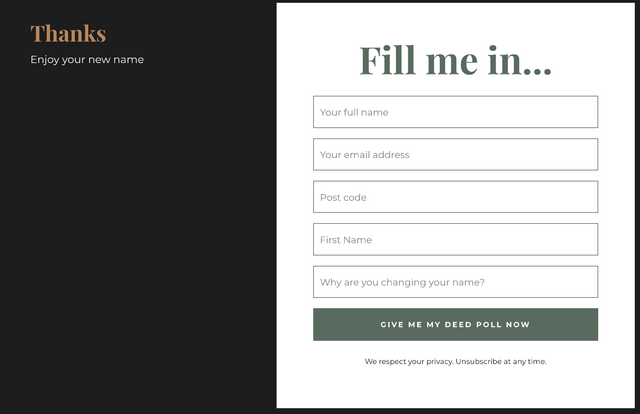

Both their YouTube and TikTok channels provide a link not to their “website” but to a kit.com page that asks for some personal details with the promise of a deed poll at the end of it.

When you fill in the form – and obviously you shouldn’t do so using real information – you get added to a marketing email list and a handful of other mailing lists get pushed at you.

Kit.com require double-opt-in confirmation for mailing lists, but the email tries to trick you into clicking the button, saying that clicking the “confirm your subscription” button “help us know you have received the deed poll and everything works”. In reality, they’re just trying to legitimise their spamming.

And what do you get out of it after all this? A hyperlink to a publicly-accessible Google Drive folder called “Deed Polls”[sic]⁴ that a more-ethical outlet could have just linked to in the first place. It contains a couple of Word documents that require you to delete a ton of underscores in order to type your own content in.

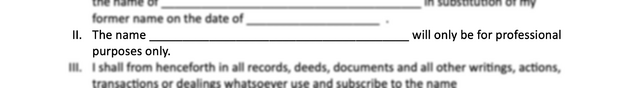

Oh, and the templates are full of mistakes. Here’s one (there are others!):

Of all the scammy free deeds poll services I’ve seen, ChangeNames is the worst

What we’ve got here is…

- a marketing scam pretending to be a deeds poll service,

- being run ineptly, e.g. marketing using a domain name they haven’t yet purchased and providing broken links to their own social media,

- using unethical techniques to harvest personal information,

- in exchange for a deed poll template that’s riddled with errors. 🤦

But the really insane thing about this whole scam is that a human being found my video about my own (superior, ethical) service FreeDeedPoll.org.uk… and then figured that they’d email me to see if I’d like to pass some traffic to their (inferior, unethical) competitor.

That bit… that’s the bit that blows my mind.

Footnotes

1 I can’t tell you exactly how many because I make a deliberate effort to collect no personal information, without which I’m unable to pin down a specific number. But I’ve had many hundreds of emails from people who’ve changed their names, and have anonymous statistics to suggest that the number is almost-certainly in the tens of thousands, maybe in the low hundreds of thousands.

2 I’m not a lawyer, but I’ve become pretty familiar with lots of relevant parts of the laws about not just names but adjacent areas like citizenship, residency, gender identity, information protection, and parental rights, and I’ve been able to point many people towards satisfactory conclusions when they’ve had more-challenging name changes.

3 It might not be working yet, depending on the state of DNS propagation, but it’ll get there in a day or so I reckon.

4 The plural of deed poll is, of course, deeds poll, but one could hardly expect these clowns to know that.

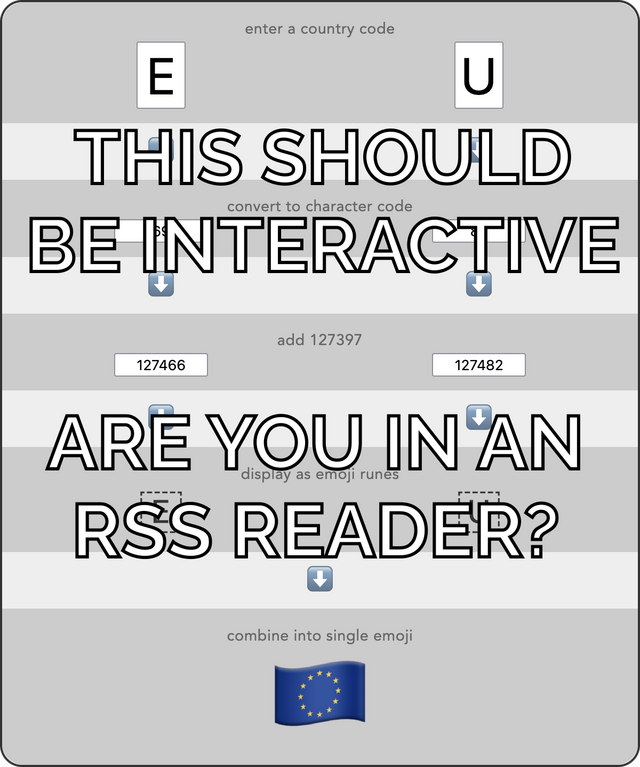

Converting ISO Country Codes to Flag Emojis

Today I learned something that is probably already well-known in some circles… but I hadn’t noticed it before and it made me go “wow”:

There’s a really simple algorithm for converting ISO 3166-1 alpha-2 country codes into the emoji representations of the flags of those countries.

I made an interactive to show how it works (enter a two-letter country code!). There’s a longer explanation below:

Here’s the essence of the algorithm:

- Take the two-letter country code, e.g. FR for France.

- Get the character code of the uppercase variant of each letter: so F becomes 70 and R becomes 82.¹

- Add 127,397 to each of them, so now F is 127,467 and R 127,479.

- Render the Unicode characters at those codepoints (they’re “regional indicator symbols”): F turns into 🇫 and R turns into 🇷.

- Concatenate those characters and you get the emoji of the flag: 🇫🇷
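The steps above can be checked with a few lines of Go. This is my own illustrative sketch, not necessarily how the interactive works: it derives the magic number 127,397 as the codepoint of U+1F1E6 (REGIONAL INDICATOR SYMBOL LETTER A) minus the codepoint of "A", then prints each intermediate value for "FR".

```go
package main

import "fmt"

// The regional indicator symbols run from U+1F1E6 (letter A) to U+1F1FF
// (letter Z), in the same order as the ASCII capitals 'A' (65) to 'Z' (90).
const regionalIndicatorA = 0x1F1E6

// offset is the gap between an ASCII capital and its regional indicator.
const offset = regionalIndicatorA - 'A' // 127397

func main() {
	for _, letter := range "FR" {
		cp := letter + offset
		// Shows the character code, the addition, and the resulting codepoint.
		fmt.Printf("%c: %d + %d = %d (U+%X) -> %c\n", letter, letter, offset, cp, cp, cp)
	}
}
```

Running it shows F mapping to 127467 (U+1F1EB) and R to 127479 (U+1F1F7), matching the figures above.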

I’ve often found things that are wonderfully clever about Unicode, but this might be my new favourite.

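```go
package main

import (
	"fmt"
	"strings"
)

// countryEmojiFlag turns an ISO 3166-1 alpha-2 code such as "fr" or "FR" into
// its flag emoji, or returns "" if the input isn't exactly two letters A-Z.
func countryEmojiFlag(countryCode string) string {
	cc := strings.ToUpper(strings.TrimSpace(countryCode))
	if len(cc) != 2 || cc[0] < 'A' || cc[0] > 'Z' || cc[1] < 'A' || cc[1] > 'Z' {
		return ""
	}
	// Shift each letter by 127397 onto its Unicode regional indicator symbol.
	return string([]rune{rune(cc[0]) + 127397, rune(cc[1]) + 127397})
}

func main() {
	fmt.Println(countryEmojiFlag("fr")) // 🇫🇷
}
```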
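Because the mapping is just a fixed offset, it also runs in reverse. Here's a sketch of the opposite direction (my own addition; `flagEmojiCountryCode` is a hypothetical name): it accepts exactly two regional indicator symbols, subtracts 127,397 from each, and returns the resulting letters. Like `countryEmojiFlag`, it doesn't check whether the code is actually assigned in ISO 3166-1.

```go
package main

import (
	"fmt"
	"unicode/utf8"
)

const (
	regionalIndicatorA = 0x1F1E6                  // REGIONAL INDICATOR SYMBOL LETTER A
	regionalIndicatorZ = 0x1F1FF                  // REGIONAL INDICATOR SYMBOL LETTER Z
	flagOffset         = regionalIndicatorA - 'A' // 127397
)

// flagEmojiCountryCode returns the two-letter code spelled by a pair of
// regional indicator symbols, or "" if the input isn't exactly two of them.
func flagEmojiCountryCode(flag string) string {
	if utf8.RuneCountInString(flag) != 2 {
		return ""
	}
	letters := make([]rune, 0, 2)
	for _, r := range flag {
		if r < regionalIndicatorA || r > regionalIndicatorZ {
			return ""
		}
		letters = append(letters, r-flagOffset) // back to 'A'..'Z'
	}
	return string(letters)
}

func main() {
	fmt.Println(flagEmojiCountryCode("\U0001F1EB\U0001F1F7")) // FR
	fmt.Println(flagEmojiCountryCode("hello"))                // empty line: not a flag
}
```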

Today was also the day that I discovered that while SU is a reserved 2-letter ISO 3166-1 designation for the Soviet Union, the flag of the USSR is not a registered emoji. But if it were, we can work out what codepoint it’d be at! So I can type this – 🇸🇺 – here, safe in the knowledge that if that emoji comes to exist in the future, then you’ll be able to revisit this blog post and see it!

You know what: there might be a game in these country codes and their flags somewhere. Like a game where you have to get from one country to another, say from the 🇨🇰 Cook Islands (CK) to 🇧🇯 Benin (BJ), but you’re only allowed to change one letter at a time and you have to land in a real country. I think the fastest route between those two takes three steps, e.g. 🇨🇰 Cook Islands (CK) to 🇹🇰 Tokelau (TK) to 🇹🇯 Tajikistan (TJ) to 🇧🇯 Benin (BJ)… It’s probably a bit easy, though: I haven’t yet found any that require more than three moves and most can be done in just two.

It gets a lot harder if you require letters to only be changed to an adjacent letter, but this variant makes some permutations impossible. Maybe there’s an optimisation puzzle in the style of the Travelling Salesman problem? Or maybe mixing in geographical restrictions, such as being unable to visit a certain continent, would make it more challenging and fun? Just brainstorming here…
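The letter-swapping game is a shortest-path problem, so a breadth-first search over country codes finds the minimum number of moves. Here's a hedged Go sketch. The `codes` list is deliberately only a small, hand-picked subset of real ISO 3166-1 alpha-2 codes (just enough for the example), so you'd need to swap in the full list of assigned codes to play properly; the `adjacentOnly` flag implements the harder variant where a letter may only move one step along the alphabet.

```go
package main

import "fmt"

// A small subset of real ISO 3166-1 alpha-2 codes, for illustration only.
var codes = []string{
	"BE", "BH", "BJ", "CA", "CH", "CK", "DE",
	"DK", "FI", "FR", "GA", "GB", "TJ", "TK",
}

// shortestRoute does a breadth-first search from one code to another,
// changing one letter per move and only landing on codes in the list. With
// adjacentOnly set, a letter may only change to its alphabet neighbour
// (e.g. K to J or L). It returns the route including both ends, or nil.
func shortestRoute(from, to string, adjacentOnly bool) []string {
	valid := make(map[string]bool, len(codes))
	for _, c := range codes {
		valid[c] = true
	}
	if !valid[from] || !valid[to] {
		return nil
	}

	parent := map[string]string{from: ""} // also records which codes we've seen
	queue := []string{from}
	for len(queue) > 0 {
		current := queue[0]
		queue = queue[1:]
		if current == to {
			// Walk the parent links back to the start, building the route.
			var route []string
			for c := to; c != ""; c = parent[c] {
				route = append([]string{c}, route...)
			}
			return route
		}
		for pos := 0; pos < 2; pos++ {
			old := current[pos]
			for letter := byte('A'); letter <= 'Z'; letter++ {
				if letter == old {
					continue
				}
				if adjacentOnly && letter != old-1 && letter != old+1 {
					continue
				}
				next := []byte(current)
				next[pos] = letter
				candidate := string(next)
				if _, seen := parent[candidate]; valid[candidate] && !seen {
					parent[candidate] = current
					queue = append(queue, candidate)
				}
			}
		}
	}
	return nil
}

func main() {
	fmt.Println(shortestRoute("CK", "BJ", false)) // [CK TK TJ BJ]
	fmt.Println(shortestRoute("GB", "GA", true))  // [GB GA]
	fmt.Println(shortestRoute("CK", "BJ", true))  // [] (no route within this subset)
}
```

Because BFS explores every one-move neighbour before any two-move neighbour, the first route it finds is guaranteed to be a shortest one, though ties between equally short routes are broken by search order.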

Footnotes

1 An alternative way of thinking about it is that you’re taking the number of the letter in the alphabet – e.g. F=6, R=18 – and adding 64 to each. Here’s why, and why it’s beautiful.

2 I don’t get to write Go often, and I seem to get rusty at it quickly, but I enjoy the feeling of writing something so raw and yet so clean.

Ground White Pepper

There are many things I don’t like about the kitchen in the Chicory House where we’re living medium-term following our house flood.

But I like the fact that the integrated spice rack makes it much easier to see where we perhaps have a very-specific blind spot for “buying a new one when the last one’s still more than half-full”.

Wikipedia @ 25: Surface plasmon resonance

I think I’m probably done with my blog (and podcast) series of Wikipedia @ 25 posts. It’s been a surprising amount of work.

But don’t think I’ve stopped hitting Random Article! Today I was reading about surface plasmon resonance, and, despite looking at it on and off all day… I still don’t think I “get” it. I’ve even dived into the linked articles to try to get a background understanding of the topics around it, but… nope. It’s still all gibberish to me!

Think I need the ELI5 version!

Disabling AI in WordPress 7.0

This is a repost promoting content originally published elsewhere. See more things Dan's reposted.

…

Because I have access to wp-config.php, I added the following to my file:

define( 'WP_AI_SUPPORT', false );…

A useful tip.

Personally, I’ve got what feels like an even-better approach (for me, at least): I switched to ClassicPress a year and a bit ago, and haven’t looked back! It’s a stripped-down fork of WordPress with no Gutenberg, lighter JavaScript, and a handful of other features… plus ClassicPress is already AI-free and staying that way.

This isn’t to say that you can’t use AI with ClassicPress, just that you don’t have to install the feature if you’re never going to use it. Given WordPress’s good plugin architecture, it seems strange to me that such divisive features would become part of the core product, but that just seems to be the direction that the project’s been going in for a while now.

Bringing Three Rings volunteers together: doing remote-first in person, and what to eat in a crisis

This is a repost promoting content originally published elsewhere. See more things Dan's reposted.

…

Three Rings CIC is, and always has been, a fully-remote organisation. We were doing remote working almost two decades before the Pandemic made it cool (and well before tools like Slack and Zoom were a thing: we cut our remote-first teeth using IRC as our collaboration tool!), but there are still occasions when it’s good to have as many people as possible physically in a room.

When, last year, the Nightline Association announced it was closing down, it put one of their key services, Nightline Portal, which helps Nightlines to take and handle calls these days, at serious risk: someone had to host and maintain it, and that had always been the Association. At the point the announcement was made, in February, the Portal team had about four months to find it a new home.

It took me some degree of back-and-forth with the Nightline Association on one side, and it required some careful governance and planning at our end (as well as a few shifts in short-term priorities!), but – helped by the fact we all wanted the best possible outcome for Nightlines – we got an agreement in place, a budget plan agreed, and were able to ensure Portal would keep going, for free, faster than I think anyone had expected.

That mattered to Nightlines, because to them, it’s critical infrastructure. And it mattered to us, because Nightlines were where Three Rings began, back in 2002. Today, we support everything from major national charities to tiny community shops, but Nightlines remain close to our heart. Almost all our team – across a wide range of “x decades ago”! – started as Nightline volunteers; we’ve nearly all spent the night awake, quietly waiting out the small hours in case one of our fellow students needed someone to talk to in a crisis, and offering a listening ear when they called. We weren’t going to let that community lose something it relied on.

But adopting Portal meant a lot of work, against the clock. Data validation, new agreements, rebudgeting, and, once that was all done, a full migration to shift Portal from the Nightline Association’s server infrastructure to ours. So to get that done, we organised an in-person meetup, “Portal Camp,” in a reasonably central hotel. Volunteers gave up their weekend, left their homes on Friday evening for two more days of work, and we brought everyone together. We spent Saturday morning planning, carrying out test migrations, preparing comms, and agreed yes – we can go.

…

About a year ago I helped look after the technical side of the “lifeboating” of Portal into Three Rings, right through to the point where everything went wrong and my developers almost missed dinner (and, indeed, had to eat at their laptops!). I mentioned at the time my awe at, and pride in, them, but JTA’s post goes deeper and further and hints at the (much bigger) structural and procedural changes that were needed to adopt Portal.

A great thing about volunteering with Three Rings is that we get to ask, on any given day “how can we do the most good?” Not “will this give value to shareholders?” Not “what’s the marketing strategy for this?” Not “can this deliver return on investment?” Those are questions for a very different kind of organisation to us. We get to ask, each and every day, “how can we do the most good?”

That question is why, for me, adopting Portal into the Three Rings family, last year, was a no-brainer. Dozens of voluntary organisations depended upon it, and we had the skills and volunteers and technical infrastructure to stop it from dying.

Anyway: JTA’s post on LinkedIn is better, and more-interesting, and somehow also funnier than mine, so go read that. And if you want to talk volunteering with me, I’d love to chat!

Is AI Profitable Yet?

This is a repost promoting content originally published elsewhere. See more things Dan's reposted.

No surprises here, but it’s interesting/staggering to see quite how large the disparity between spending and profit is for some of these companies.

I enjoy the fact that there’s a real-time ticker on the site so you can watch Amazon (for example) burn five thousand dollars a second.

When I tell people that generative AI, as it’s currently used, is unsustainable, this is what I’m talking about. Unless there’s a quantum leap in AI efficiency (for whose feasibility I’ve seen no evidence) or a dramatic increase in the charged cost of LLM services (on the order of a tenfold increase, assuming the increased cost does not drive any customers away; more if it does), this whole thing looks like a house of cards.

Wikipedia @ 25: Carl Person

Podcast Version

This post is also available as a podcast. Listen here, download for later, or subscribe wherever you consume podcasts.

To celebrate the site’s 25th birthday this year, Wikipedia is encouraging/challenging people to read one Wikipedia article a day for 25 consecutive days. I felt that I could do one better than that: not only reading an article but – where I found one that was particularly interesting – writing a blog post or recording a podcast episode for each of those days, sharing what I learned. For each entry, I’ll hit “random article” a few times until something catches my interest, start reading, and then start writing! Everything I’ve written below came from Wikipedia… so you should check other sources before you use it to do your homework. Happy birthday, Wikipedia!

Today’s random article: Carl Person

Today’s topic: Carl Person

Just sometimes when you’re playing the “hey, Wikipedia, give me a random page” game, you get a hole in one. That’s what happened today when I landed on the article for… Carl Person.

Yes, Person is his actual surname. Speaking as a person with a stupid name, it pleases me to find people whose names probably cause them at least as much trouble as mine does. Wikipedia wasn’t any help at understanding where the surname Person comes from (and Carl himself isn’t even noteworthy enough to appear on the list of “notable people with that surname”, it seems).

However, I did enjoy discovering jazz saxophonist Houston Person (which sounds like the beginning of a news headline about somebody from Houston!), who once released an album called… Person to Person! Excellent. Also actress and filmmaker Marina Person, whose documentary about her father, filmmaker Luis Sérgio Person, was titled simply Person. I think the name might be related to the Swedish surname Persson – literally, “son of Per” – where Per is a Scandinavian variant of Peter. This probably means that there’s a “Per Person” somewhere in the world, and I want to meet him.

Anyway: back to Carl. He trained as a lawyer and spent the 1960s working in a variety of corporate law firms. These included the one in which Richard Nixon was a partner, during the period after Nixon failed to get elected as Governor of California and announced that he was retiring from politics… only to come back six years later to be elected president and, well, you know the rest.

The interesting bits of Carl’s career came later.

After the American Bar Association endorsed the concept of a paralegal in 1967, Person founded the Paralegal Institute, a name that’s so polluted with people using it that even the closest-named Wikipedia article seems to be talking about something similar… but different. (This seems to be pretty much par for the course in the American paralegal system, though: did you know that a “certified paralegal” and a “certificated paralegal” are two completely distinct and non-interchangeable things?)

Anyway: other things he did as part of his legal career were –

- Represented other members of The Teenagers (then The Premiers, because confusingly the band changed their name to “The Teenagers” when they got older) in their efforts to reclaim shared copyright of their 1956 hit Why Do Fools Fall in Love from lead singer Frankie Lymon and Gee Records.

- Represented playwright Mark Dunn in his successful claim that The Truman Show was based upon his 1992 play, Frank’s Life, whose script he’d previously attempted to sell to Paramount.

- Helped Ralph Anspach (whose book I read before writing this 2013 blog post!) in his appeal against a ruling that Anspach’s board game Anti-Monopoly was derivative of Parker Brothers’ stake in Monopoly: the appeal was successful at least in part because Person and Anspach were able to prove that Monopoly was, itself, derived from Lizzie Magie’s The Landlord’s Game. (Fun fact: this was the second time Carl successfully took on Parker Brothers; the first being the Masterpiece case, representing Christian Thee!)

In 2012 Person put himself forward to be the Libertarian candidate for the presidential election, losing out to Gary Johnson (who had in turn switched sides after he realised he wasn’t going to become the Republican nominee). Gary Johnson eventually got 0.99% of the popular vote, almost breaking the 1% barrier that only 33 third-party candidates have ever achieved in US history.

Not a bad bit of reading for a hole-in-one article.

Twenty Inches

My Biggest Fan

Pied Wagtail and Coffee

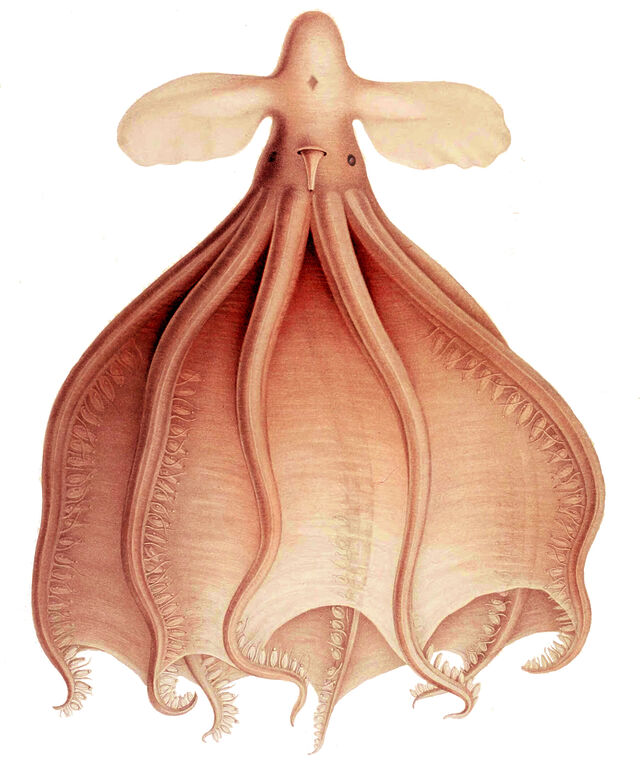

Wikipedia @ 25: Cirrothauma Murrayi

Podcast Version

This post is also available as a podcast. Listen here, download for later, or subscribe wherever you consume podcasts.


Today’s random article: Cirrothauma

Today’s topic: Cirrothauma murrayi

My random landing page today is a genus for which there’s only a single species, so I hopped over to that species’ page.

And what a species!

This is the blind cirrate octopus (Cirrothauma murrayi), a species found deep in oceans all around the world, but at such depths that it’s not well understood. We’re not even sure whether the specimens we’ve studied represent a single species or two separate species!

The Latin name comes from oceanographer John Murray, best known for his work on the Challenger Expedition of 1872–1876, but whose four-month North Atlantic Oceanographic Expedition in 1910 – which he self-funded – was the first to find this unusual species. It was described by Carl Chun, whose previous claim to fame had been the discovery of the (also amazingly alien-looking) vampire squid, seven years earlier.

(The vampire squid is its own amazing thing: did you know that it turns itself inside out to evade predators, exposing the inner surface of its spiked tentacles? Also it can spit glow-in-the-dark mucus to dazzle an attacker.)

You can tell it’s a cirrate octopus by those fins on its head. Cirrates are one of the two major groups (suborders) of octopodes: they’re the ones that do have a pair of mini strands dangling off each sucker on each tentacle, but don’t have an ink sac. They’re also notoriously fragile, and when we’ve pulled them up for research purposes they’re often in poor condition by the time they’re on the surface… and that’s especially true for deep dwellers like the blind cirrate octopus.

As for blind: well – it’s got eyes… but those eyes don’t have lenses. As a result, it’s probably able to tell light from dark but probably can’t make out the particular shapes of objects. (Contrary to claims by proponents of “intelligent design” that the eye is irreducibly complex, this is a great example of an eye with only some of the components that seem essential to a fully-functional organ, which nonetheless still provides value to its host!)

Speaking of which – do you know how cool the eyes of an octopus are?

- Like all cephalopods, they have no blind spot because their retina is in front of the nerve fibres instead of behind them.

- Like squid and possibly cuttlefish, they can differentiate the polarisation of light. (I believe that sheep and goats can, too!)

- Their pupils automatically rotate to stay horizontal, no matter which way up they are!

There’s some debate about whether or not octopodes and other cephalopods’ eyes evolved from a shared ancestor or are an example of convergent evolution, and the arguments for both are really interesting.

Of course, our friend the blind cirrate octopus is, umm… mostly blind. Very different from other octopodes.

As I said, we know so little about it! We don’t know what it eats (we think it probably eats whole shellfish). We don’t know how it breeds. We don’t know how commonplace it is or whether its environment is under threat.

But what we do know is that it’s a freaky-looking thing from way down deep. Thanks, Wikipedia, for telling me about this strange beast. Let’s see what you have to share with me tomorrow!